NEURAL SYSTEMS INVOLVED IN DISCRIMINATION LEARNING

Humans and other animals classify environmental and internal information from the moment they are born. This process is dynamic. Individuals organize events differently depending on how long they have lived and what they have experienced. Scientists knew little about the dynamics of classification until Pavlov (1927) developed experimental techniques for examining how dogs learn to distinguish events that predict food from those that do not. Pavlov's work had a profound influence on how scientists thought about the acquisition of learned classificatory responses. In particular, his findings led to an astonishing number of studies directed toward understanding how animals learn to discriminate environmental stimuli. Much of this research focused on describing how a stimulus becomes linked to the initiation or inhibition of a response. Less attention was given to examining how learning affected stimulus representations. Interestingly, although Pavlov's studies were specifically directed toward understanding the neural bases of discrimination learning, most subsequent work primarily has focused on explaining and predicting behavioral responses, and rarely has addressed neural mechanisms.

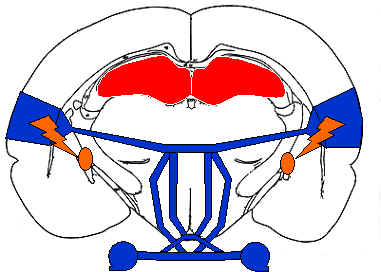

Neural systems thought to be involved in auditory discrimination learning include the cerebellum, the amygdala, the basal forebrain, the hippocampus, the basal ganglia, the ventral tegmental area, and of course, the auditory pathways from the cochlea to the auditory cortex. Clearly many neural systems play a role in discrimination learning, but how these systems interact to mediate increases in performance remains unclear. Behavioral, neuroimaging, and electrophysiological evidence suggests that how stimuli are represented in auditory cortex depends on experience, that auditory cortical representations remain plastic in adults, and that changes in how stimuli are represented can be induced in a variety of ways.

Hearing was the first sensory modality to be successfully rehabilitated using electrical neurostimulation. Electrical stimulation of auditory cortex facilitates experience-dependent shifts in auditory sensitivities, as does stimulation of neuromodulatory neurons during the presentation of sounds. How such changes affect perceptual abilities is not yet known. Some researchers have found that experience-induced changes in cortical responses to pure tones may be uncorrelated with changes in frequency discrimination abilities. Other experiments involving more complex sounds, however, have shown robust correlations between changes in cortical sensitivities and increases in performance.

Clarifying the effects of neurostimulation and behavioral training on the perceptual encoding of acoustic events will help reveal how these techniques can best be used to remediate cortical deficits resulting from neurophysiological damage or dysfunction. Results from ongoing experiments can also contribute further to the creation of general theoretical descriptions and computational models of the neural systems involved in learning and memory. Understanding the neural bases of discrimination learning and how neurostimulation can be used to enhance the construction and maintenance of stimulus representations are the primary goals of this research project.

COMPUTATIONAL MODELS OF AUDITORY CORTICAL PROCESSING

Auditory signal processing can be characterized as an adaptive transformation from a one-dimensional space (corresponding to variations in pressure) into an n-dimensional auditory parameter space. This transformation can be modeled as a chirplet transform implemented via a self-organizing neural network. Computational techniques can provide insight into how neural circuits encode, store, and retrieve representations of acoustic events. The standard approach to modeling auditory cortical processing has been to start with a general signal processing model (e.g., Fourier or wavelet transforms), and then add on specialized processing components (e.g., matched filters) as necessary to reflect species-specific sensitivities. This approach is problematic because (1) the customization needed to describe cortical sensitivities in any given species can only be determined post-hoc (i.e., the models are not predictive); (2) most evidence suggests that auditory cortical sensitivities reflect the particular needs of individuals faced with species-specific ecological and biological constraints, rather than generic acoustic signal processing strategies; and (3) changes in auditory processing resulting from development, injury, or experience are not considered in these models. The chirplet transform subsumes both Fourier analysis and wavelet analysis (as well as several other classes of time-frequency analysis) as lower dimensional subspaces in the chirplet analysis space, providing a flexible framework for characterizing the full range of auditory cortical sensitivities observed in mammals.

The chirplet transform retains the advantages offered by time-frequency and wavelet transforms, and additionally provides a natural way for characterizing the different types of processing that have been described for different auditory fields (cortical regions with systematically related response sensitivities). Each auditory field can be viewed as a processor for decomposing sounds within a particular subspace of the complete auditory parameter space. In the current model, these fields correspond either to chirplet subspaces or to chirplet spaces generated by sets of functionally relevant basis functions. Chirplet spaces are highly overcomplete (redundant) because there are an infinite number of ways to segment a time-frequency plane. Because of this overcompleteness, the same acoustic feature may be encoded multiple times. Such overcomplete encoding corresponds well with the overlapping, parallel signal processing pathways observed in the mammalian auditory cortex.
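To make the idea of chirplet subspaces concrete, the following is a minimal sketch of a single Gaussian chirplet atom and its projection onto a signal. It assumes a Gaussian envelope and a linear frequency sweep; the function names, the sampling rate, and the test signal are illustrative assumptions and are not taken from the model described here. Setting the chirp rate to zero gives a Gabor atom, which is the fixed-window (short-time Fourier) special case, while tying the duration inversely to the center frequency gives a wavelet-like family.

```python
import numpy as np

def gaussian_chirplet(t, center_time, center_freq, chirp_rate, duration):
    """Unit-energy Gaussian chirplet sampled at times t (seconds).

    center_freq is in Hz, chirp_rate in Hz per second, and duration is the
    standard deviation of the Gaussian envelope in seconds.
    """
    tau = t - center_time
    envelope = np.exp(-0.5 * (tau / duration) ** 2)
    # Linear chirp: instantaneous frequency = center_freq + chirp_rate * tau
    phase = 2.0 * np.pi * (center_freq * tau + 0.5 * chirp_rate * tau ** 2)
    atom = envelope * np.exp(1j * phase)
    return atom / np.linalg.norm(atom)

def chirplet_coefficient(signal, atom):
    """Inner product of the signal with one atom (np.vdot conjugates the atom)."""
    return np.vdot(atom, signal)

def demo():
    """Project a hypothetical upsweep onto a matched chirplet and a Gabor atom."""
    fs = 8000.0                                  # assumed sampling rate (Hz)
    t = np.arange(0.0, 0.5, 1.0 / fs)
    # Hypothetical test sound: a real-valued upsweep centered on 1 kHz
    sweep = gaussian_chirplet(t, 0.25, 1000.0, 4000.0, 0.05).real
    matched = gaussian_chirplet(t, 0.25, 1000.0, 4000.0, 0.05)
    gabor = gaussian_chirplet(t, 0.25, 1000.0, 0.0, 0.05)   # chirp rate 0
    return (abs(chirplet_coefficient(sweep, matched)),
            abs(chirplet_coefficient(sweep, gabor)))

if __name__ == "__main__":
    matched_mag, gabor_mag = demo()
    print("matched chirplet:", matched_mag, "Gabor atom:", gabor_mag)
```

In this sketch the atom whose chirp rate matches the sweep yields a much larger coefficient than the zero-chirp Gabor atom at the same center time and frequency. A bank of such atoms spanning many chirp rates, durations, and center frequencies would encode the same sound many times over, which is the sense in which the representation is overcomplete.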

Self-organizing maps are a type of neural network with a highly flexible architecture that can be easily customized. Self-organizing maps with biologically-based response characteristics can emulate the spatial organization of response properties observed in auditory cortex, as well as the competitive adaptation currently theorized to underlie changes in auditory cortical organization. As noted above, cortical representations of sound can be modified by repeatedly pairing presentation of a sound with electrical stimulation of neuromodulatory neurons. Stimulation-induced auditory plasticity can be simulated using parameters intrinsic to the self-organizing map such as the learning rate (controlling the adaptability of map nodes), and the neighborhood function (controlling the excitability of map nodes).
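The role of these two parameters can be illustrated with a minimal one-dimensional self-organizing map whose nodes each hold a preferred frequency. This is a sketch under stated assumptions rather than the model used in this research: frequencies are expressed in arbitrary octave units, stimulation is represented simply as a temporarily elevated learning rate while one target tone is presented, and all names and parameter values are hypothetical.

```python
import numpy as np

def som_update(weights, tone, learning_rate, neighborhood_width):
    """Apply one Kohonen update, in place, to a 1-D map of preferred frequencies."""
    positions = np.arange(weights.size)
    winner = np.argmin(np.abs(weights - tone))      # best-matching node
    # Gaussian neighborhood: nodes near the winner on the map adapt the most
    neighborhood = np.exp(-0.5 * ((positions - winner) / neighborhood_width) ** 2)
    weights += learning_rate * neighborhood * (tone - weights)
    return weights

def nodes_near(weights, tone, tolerance=0.25):
    """Count nodes whose preferred frequency lies within tolerance of the tone."""
    return int(np.sum(np.abs(weights - tone) <= tolerance))

def simulate_pairing(n_nodes=20, target=2.5, seed=0):
    """Return how many nodes are tuned near the target before and after pairing."""
    rng = np.random.default_rng(seed)
    # Start from an ordered (tonotopic) map spanning 0 to 5 octaves
    weights = np.sort(rng.uniform(0.0, 5.0, n_nodes))
    # Baseline experience: tones drawn uniformly across the range
    for tone in rng.uniform(0.0, 5.0, 2000):
        som_update(weights, tone, learning_rate=0.1, neighborhood_width=2.0)
    before = nodes_near(weights, target)
    # Pairing phase (assumption): stimulation modeled as a higher learning rate
    for _ in range(30):
        som_update(weights, target, learning_rate=0.3, neighborhood_width=2.0)
    after = nodes_near(weights, target)
    return before, after

if __name__ == "__main__":
    before, after = simulate_pairing()
    print("nodes tuned near target before:", before, "after:", after)
```

In this sketch, raising the learning rate during the pairing phase draws more nodes toward the paired tone, a simple analogue of an expanded cortical representation. Widening or narrowing `neighborhood_width` changes how far that recruitment spreads across the map.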

There are numerous ways to computationally model the processes involved in auditory perception and learning. Connectionist models are convenient tools for testing the adequacy of qualitative explanations of how brains process sound as well as for generating new hypotheses. Neural network and signal processing models are useful for instantiating process-level models of neural and cognitive functions, but less so for emulating neural circuits. My goal as a modeler is to provide a concise and precise account of how mammals represent acoustic events.

HUMPBACK WHALE BIOACOUSTICS

The humpback whale auditory system may be the most dynamic acoustic processing system on the planet, and yet little is known about its basic capabilities and even less is known about how it functions. Humpback whales are best known for their singing behavior, which is believed by most scientists to be a sexual advertisement display. The sounds that humpback whales produce can provide important clues about humpbacks' auditory capabilities. Biomimetic models of sound production and reception can be derived from the acoustic features of humpback whale sounds. These models can be used in combination with simulations of sound propagation in the ocean environments frequented by humpback whales, to determine what types of bioacoustic information are available for humpback whales to process. By understanding how biology and environmental conditions constrain how humpback whales perceive sounds, one can gain new insights into how they might use those sounds. For example, simulations of humpback whale song transmission and reception suggest that humpbacks can potentially use sounds within their songs as low-frequency echolocation signals to detect and localize other whales at ranges exceeding two kilometers. These simulations also indicate that song sounds contain spectral information that could allow listening whales to determine how far the sounds have traveled.

Humpback whales vary both the acoustic features of song sounds and the sequential structure of songs over time. Whales within a particular area appear to match their songs to the songs of other whales that they have heard singing. Because humpback whales are continuously changing the acoustic properties of their songs based on biologically relevant acoustic events that they have experienced (i.e., songs produced by other whales), they must have highly flexible sound production capabilities, as well as exceptional auditory learning and memory skills. To be able to emulate spectrotemporally complex sounds that are novel and potentially distorted by propagation, humpback whales must possess an auditory system that can encode novel sounds precisely enough that they can later be reproduced. Few mammals other than humans and cetaceans have such sophisticated auditory processing capabilities.

What is it about humpback whales and humans that allows them to use sounds so flexibly? Surely it is their brains, but the specific neural and cognitive mechanisms that give rise to these unique abilities are not yet known. Comparative studies of auditory learning and plasticity provide a broad perspective from which to answer questions about how mammals process sound. My goal as a bioacoustician is to clarify how whales and dolphins produce, receive, and use sound, and to determine how similar the auditory processing techniques used by cetaceans are to those used by other mammals, including humans.